Why Do Multi-Agent LLM Systems Fail?

Ever felt like AI automation’s promise is just a dream for your business? You’re not the only one. Many entrepreneurs spend a lot on complex systems, only to see them fail.

The truth is, multi-agent LLM systems often fail because of a wide gap between what is possible and what actually works in production. The hype promises instant results, but the real challenge is handling technical issues that are often ignored. By understanding these common problems, we help you create resilient, reliable workflows that actually work.

Key Takeaways

- The gap between AI hype and real-world performance is a common hurdle for business owners.

- Understanding technical limitations is the first step toward creating stable automation.

- Complex workflows often break due to poor planning rather than bad technology.

- Demystifying these issues allows you to avoid expensive and time-consuming technical dead ends.

- Empowerment comes from shifting your perspective toward informed, practical AI implementation.

The Architecture of Multi-Agent Systems

Artificial intelligence gets a big boost when you team up different models. By setting up multi-agent systems, you can tackle complex tasks that a single chatbot can’t handle.

Defining the Multi-Agent Framework

A multi-agent framework is like a digital team. Each member has a specific role, just like in a small business. There’s someone for marketing, finances, and customer support.

Each agent works in its own area but aims for the same goal. This setup makes things more efficient. Each part does its best job without trying to do everything.

Communication Protocols and Agent Autonomy

For these systems to work, they need clear rules for talking to each other. These protocols are like a language that lets agents share info and work together.

“True collaboration in artificial intelligence requires not just the ability to process information, but the capacity to negotiate and align goals across diverse, autonomous entities.”

Autonomy is key. Agents should make independent decisions based on their tasks. With clear protocols, they can handle uncertainty and solve problems on their own.

The Role of Deep Reinforcement Learning in Coordination

So, how do agents learn to work well together? Deep reinforcement learning can help here.

In reinforcement learning, agents receive a reward signal for their actions and gradually adjust their behavior to earn more of it. Applied to a team, this feedback can steadily improve how agents coordinate, making the whole team stronger than any one agent.
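The reward-feedback loop can be illustrated at toy scale. This sketch is a deliberate simplification: it swaps deep networks for tabular Q-learning, and the coordination game (two agents rewarded only when they pick the same action) is a hypothetical example, not how any particular LLM agent framework is trained.

```python
import random

ACTIONS = (0, 1)


def reward(a1: int, a2: int) -> float:
    # Hypothetical coordination game: both agents are rewarded only if they match.
    return 1.0 if a1 == a2 else 0.0


def choose(q: list[float], epsilon: float, rng: random.Random) -> int:
    # Epsilon-greedy: explore at random with probability epsilon, else exploit.
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    return max(ACTIONS, key=lambda a: q[a])


def train(episodes: int = 2000, alpha: float = 0.1, epsilon: float = 0.1, seed: int = 0):
    rng = random.Random(seed)
    q1, q2 = [0.0, 0.0], [0.0, 0.0]  # each agent's value estimate per action
    for _ in range(episodes):
        a1, a2 = choose(q1, epsilon, rng), choose(q2, epsilon, rng)
        r = reward(a1, a2)
        # Each agent independently moves its estimate toward the reward it observed.
        q1[a1] += alpha * (r - q1[a1])
        q2[a2] += alpha * (r - q2[a2])
    return q1, q2


q1, q2 = train()
# The two agents' greedy (preferred) actions should match after training.
print(max(ACTIONS, key=lambda a: q1[a]), max(ACTIONS, key=lambda a: q2[a]))
```

Each agent learns only from its own actions and the shared reward, yet the pair settles on a common choice. That emergent agreement is the coordination effect described above, in miniature.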

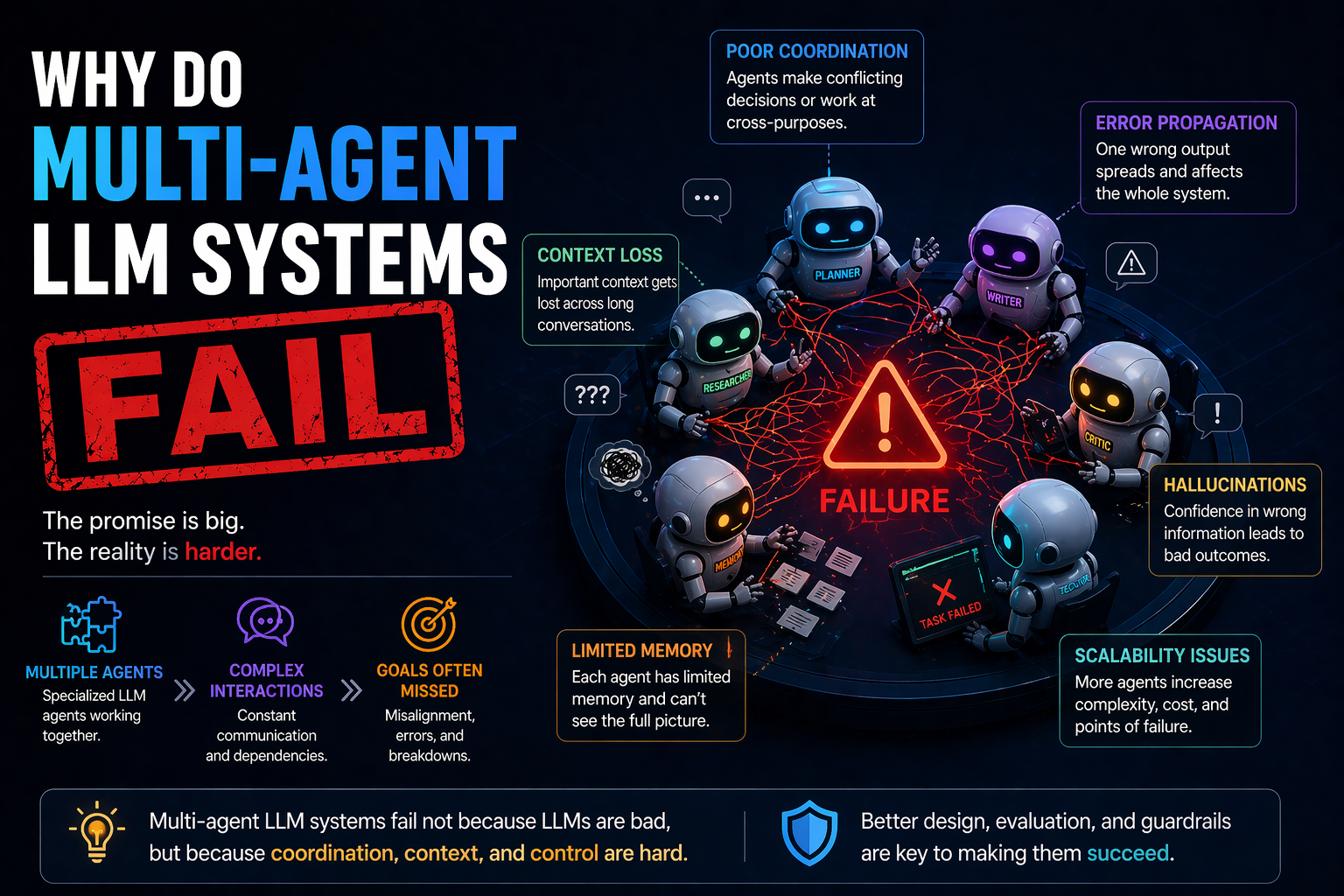

Common Reasons Multi-Agent LLM Systems Fail

Researchers behind MAST (the Multi-Agent System Failure Taxonomy) analyzed over 1,600 traces across seven frameworks to find out why automation fails. Their dataset, MAST-Data, revealed 14 common failure modes. Knowing why multi-agent LLM systems fail is key for businesses that want to move from prototypes to reliable systems.

Context Window Limitations and Information Loss

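Every LLM has a context window, a fixed token budget that works like short-term memory. When agents are chained together, details from the start of the conversation are often truncated or summarized away. This is one of the biggest limitations of LLMs in multi-agent systems, because important details quietly disappear.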

When the context gets too full, agents might lose sight of the main goal and focus on the newest information instead. The result is output that looks correct but no longer addresses the original task.
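One common mitigation is to pin the original goal so it can never be trimmed, and to drop the oldest intermediate messages first. This sketch uses a crude word count as a stand-in for a real tokenizer; `build_context` and `count_tokens` are hypothetical helper names.

```python
def count_tokens(text: str) -> int:
    # Crude proxy (assumption): one token per whitespace-separated word.
    # Swap in your model's real tokenizer for accurate budgets.
    return len(text.split())


def build_context(goal: str, history: list[str], budget: int) -> list[str]:
    """Pin the goal first, then fill the remaining budget with the most recent
    messages, dropping the oldest intermediate messages first."""
    remaining = budget - count_tokens(goal)
    if remaining < 0:
        raise ValueError("budget is too small to hold the goal itself")
    kept: list[str] = []
    for message in reversed(history):  # walk from newest to oldest
        cost = count_tokens(message)
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    return [goal] + kept[::-1]  # restore chronological order after the goal


history = [
    "step one done",
    "step two found three issues",
    "step three fixed two issues",
]
print(build_context("GOAL: summarize open issues", history, budget=12))
# ['GOAL: summarize open issues', 'step three fixed two issues']
```

A naive approach that cuts from the top of the transcript would have dropped the goal line first, which is exactly the failure described above.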

The Problem of Recursive Error Propagation

Errors in a multi-agent system don’t stay isolated. A small mistake by the first agent can be accepted as fact by the next one. This creates a recursive error propagation loop in which errors compound with every handoff.

- Input Corruption: Errors in initial data get worse with each agent.

- Feedback Loops: Agents can support each other’s mistakes.

- Validation Gaps: Without checks, errors reach the final output.
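A quick calculation shows why these gaps matter. Assume, for illustration only, that each agent independently gets its step right 95% of the time and that any single error spoils the final output. The chance of a clean run is then 0.95 raised to the number of agents:

```python
def chain_success_rate(per_agent_accuracy: float, num_agents: int) -> float:
    # Assumes independent errors and that any one error corrupts the final output.
    return per_agent_accuracy ** num_agents


for n in (1, 3, 5, 10):
    print(n, round(chain_success_rate(0.95, n), 3))
# 1 0.95
# 3 0.857
# 5 0.774
# 10 0.599
```

Under these assumptions, a five-agent chain produces a corrupted result roughly 23% of the time, even though every individual agent looks reliable.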

Inconsistent Reasoning Across Agent Boundaries

Different agents have different specializations, which can lead to conflicting logic. When they work together, they might interpret the same instructions differently. This inconsistency is a major reason multi-agent LLM systems fail to cooperate well.

| Failure Mode | Primary Impact | Risk Level |
|---|---|---|
| Context Truncation | Loss of instructions | High |
| Error Compounding | Inaccurate final data | Critical |
| Logic Drift | Goal misalignment | Medium |

By understanding these limitations of LLMs in multi-agent systems, you can set up better checks. The next step is a deliberate design for how agents communicate and verify their shared state.

Communication Bottlenecks and Latency Issues

When AI agents spend more time talking than working, your business pays the price. Communication overhead reduces the efficiency of multi-agent systems, causing unexpected delays and higher costs. Knowing the limitations of LLMs in multi-agent systems helps you make your workflow faster and leaner.

Synchronous Versus Asynchronous Messaging Challenges

Deciding between synchronous and asynchronous communication is key. In a synchronous setup, agents wait for a response before moving on. This makes the workflow predictable but can lead to idle time if one agent is slow.

Asynchronous messaging lets agents send requests and keep working. It boosts overall speed but adds complexity in tracking states and managing dependencies. You must balance your business’s speed needs with the need for task order.
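The trade-off can be sketched with Python's `asyncio`, where `asyncio.sleep` stands in for model latency and `call_agent` is a hypothetical placeholder for a real API call. The concurrent version only applies when the agents do not depend on each other's outputs.

```python
import asyncio
import time


async def call_agent(name: str, delay: float) -> str:
    # Placeholder for a model call; asyncio.sleep simulates network latency.
    await asyncio.sleep(delay)
    return f"{name} done"


async def run_synchronously(tasks: dict[str, float]) -> list[str]:
    # Each agent waits for the previous one: predictable, but latencies add up.
    results = []
    for name, delay in tasks.items():
        results.append(await call_agent(name, delay))
    return results


async def run_asynchronously(tasks: dict[str, float]) -> list[str]:
    # Independent agents run concurrently: total time is roughly the slowest call.
    # gather() returns results in the order the coroutines were passed in.
    return list(await asyncio.gather(*(call_agent(n, d) for n, d in tasks.items())))


if __name__ == "__main__":
    tasks = {"researcher": 0.3, "writer": 0.2, "checker": 0.1}
    for runner in (run_synchronously, run_asynchronously):
        start = time.perf_counter()
        results = asyncio.run(runner(tasks))
        print(runner.__name__, results, f"{time.perf_counter() - start:.2f}s")
```

With these simulated delays, the sequential run takes roughly the sum of all calls, while the concurrent run takes roughly as long as the slowest one.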

Token Overhead and Cost Escalation

Every message between agents consumes tokens, which directly affects your costs. Verbose exchanges expose the limitations of LLMs in multi-agent systems as higher API bills, and this token overhead can quickly get out of hand.

To control costs, aim for concise communication. Reducing context in every exchange helps keep your budget stable. Efficiency in token use is as crucial as the quality of the output.
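To see how quickly costs escalate, assume (for illustration) that every message has the same length and that each call resends the whole conversation history, as many stateless chat APIs require. The billed input then grows quadratically with the number of turns. The function names are hypothetical, and the price is left as a parameter because rates vary by provider and model.

```python
def total_input_tokens(turns: int, tokens_per_message: int, resend_history: bool) -> int:
    """Input tokens billed over a conversation of `turns` equal-length messages.

    With full-history resending, call k carries k messages, so the total is
    tokens_per_message * turns * (turns + 1) / 2 and grows quadratically.
    """
    if resend_history:
        return tokens_per_message * turns * (turns + 1) // 2
    return tokens_per_message * turns


def cost_usd(tokens: int, price_per_million_tokens: float) -> float:
    # The price is a parameter on purpose: plug in your provider's current rate.
    return tokens / 1_000_000 * price_per_million_tokens


print(total_input_tokens(20, 500, resend_history=True))   # 105000
print(total_input_tokens(20, 500, resend_history=False))  # 10000
```

In this example, twenty 500-token turns bill 10.5 times more input tokens when the full history is resent, which is why trimming context per exchange pays off.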

Managing Agent Handshakes and Protocol Complexity

Managing the “handshake” process is crucial for a smooth workflow. Complex protocols can lead to deadlocks where agents wait forever for signals. This is common when scaling beyond simple tasks.

Keep these interactions simple to ensure your system stays strong under pressure. Standardizing how agents request and acknowledge information avoids common pitfalls. The aim is to create seamless collaboration that feels natural, not a complex digital mess.
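A standardized request/acknowledge exchange with a timeout keeps one silent agent from stalling the rest. This minimal sketch uses Python's standard `queue` and `threading` modules; the `Request` and `Ack` types and the two-second timeout are illustrative assumptions, not a specific framework's protocol.

```python
import queue
import threading
import uuid
from dataclasses import dataclass, field


@dataclass
class Request:
    content: str
    request_id: str = field(default_factory=lambda: uuid.uuid4().hex)


@dataclass
class Ack:
    request_id: str
    ok: bool


def receiving_agent(inbox: queue.Queue, outbox: queue.Queue) -> None:
    # Hypothetical receiver: acknowledges one request by echoing its ID.
    request = inbox.get()
    outbox.put(Ack(request_id=request.request_id, ok=True))


def send_with_ack(inbox: queue.Queue, outbox: queue.Queue, content: str,
                  timeout_s: float = 2.0) -> Ack:
    request = Request(content)
    inbox.put(request)
    try:
        ack = outbox.get(timeout=timeout_s)  # bounded wait instead of waiting forever
    except queue.Empty:
        raise TimeoutError(f"no acknowledgement for request {request.request_id}") from None
    if ack.request_id != request.request_id:
        raise RuntimeError("acknowledgement does not match the request")
    return ack


if __name__ == "__main__":
    inbox, outbox = queue.Queue(), queue.Queue()
    threading.Thread(target=receiving_agent, args=(inbox, outbox), daemon=True).start()
    print(send_with_ack(inbox, outbox, "summarize the Q3 report").ok)  # True
```

In a real workflow, the `TimeoutError` would trigger a retry or an escalation rather than leaving the sender waiting indefinitely.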

The Impact of Hallucination Cascades

One of the biggest factors affecting multi-agent system performance is the silent spread of errors known as hallucination cascades. When you use a team of autonomous agents, you hope they work together well to solve tough problems. But if the first agent produces wrong data, the others will build on that wrong information.

This leads to a chain reaction in which the final result drifts further and further from reality. Understanding this is essential if you want to scale your AI workflows without losing quality.

How One Agent’s Error Corrupts the Entire Workflow

In a multi-agent setup, agents often use each other’s work to do their tasks. If an agent makes a mistake or misreads a prompt, it spreads the error. The next agent thinks the input is right and works on it, making the mistake seem true.

By the end, the original mistake has grown through many layers of interpretation. This can cause unreliable insights or broken customer experiences. These errors are not random glitches; they are a structural consequence of chaining agents together.

Verifying Outputs in Multi-Step Agent Chains

To keep quality high, you must check every step in the process. Looking only at the final result is not enough to catch errors introduced early on. Treat every agent handoff as a point where mistakes can enter and must be checked.

| Verification Method | Best For | Complexity |
|---|---|---|
| Cross-Checking | Fact-based tasks | Medium |
| Schema Validation | Data formatting | Low |
| Human-in-the-loop | High-stakes decisions | High |
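Step-by-step verification can be as simple as pairing each agent with a check that runs before its output moves on. In this sketch, the "agents" are plain Python functions standing in for model calls, and `run_verified_chain` and `looks_like_number` are hypothetical helpers:

```python
from typing import Any, Callable

# (step name, agent function, validator for that agent's output)
Step = tuple[str, Callable[[Any], Any], Callable[[Any], bool]]


class StepFailed(Exception):
    """Raised when an agent's output fails its validator."""


def run_verified_chain(steps: list[Step], data: Any) -> Any:
    for name, agent, is_valid in steps:
        data = agent(data)
        if not is_valid(data):
            # Stop at the first bad output instead of passing it downstream.
            raise StepFailed(f"step '{name}' produced invalid output: {data!r}")
    return data


def looks_like_number(text: str) -> bool:
    try:
        float(text)
        return True
    except ValueError:
        return False


steps: list[Step] = [
    ("extract", lambda text: {"amount": text.split("$")[-1]},
     lambda d: looks_like_number(d["amount"])),
    ("parse", lambda d: float(d["amount"]), lambda x: x >= 0),
]
print(run_verified_chain(steps, "Invoice total: $42.50"))  # 42.5
```

Because each check runs at its own step, the error message names the agent that went wrong, rather than surfacing as a mysterious bad final answer.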

Strategies for Implementing Guardrails and Validation

Creating strong guardrails is the best way to keep your business safe from AI mistakes. By setting clear limits, you make sure agents stay on track. Here are key strategies to make your system more reliable:

- Input Sanitization: Make agents check incoming data against a set format before processing.

- Self-Correction Loops: Teach agents to check their own work for logic before passing it on.

- External Tool Integration: Use search APIs or databases to check agents’ claims in real-time.

- Confidence Scoring: Ask agents to rate their confidence in their outputs, and flag low scores for human review.
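Confidence scoring and format checks can be combined into a small routing gate. This sketch assumes each agent is prompted to reply in JSON with an `answer` and a `confidence` between 0 and 1; the field names and the 0.7 threshold are placeholders. Self-reported confidence is a rough signal at best, so treat the threshold as something to tune.

```python
import json

REVIEW_THRESHOLD = 0.7  # placeholder; tune it against errors you actually observe


def route(raw_output: str) -> tuple[str, dict]:
    """Return ("accept" or "human_review", details) for one agent reply."""
    try:
        parsed = json.loads(raw_output)
    except json.JSONDecodeError:
        return "human_review", {"error": "reply was not valid JSON", "raw": raw_output}
    if not isinstance(parsed, dict) or "answer" not in parsed:
        return "human_review", {"error": "reply is missing an answer", "raw": raw_output}
    confidence = parsed.get("confidence")
    valid_score = (
        isinstance(confidence, (int, float))
        and not isinstance(confidence, bool)  # bool is a subclass of int in Python
        and 0.0 <= confidence <= 1.0
    )
    if not valid_score:
        return "human_review", {"error": "missing or invalid confidence", "raw": raw_output}
    if confidence < REVIEW_THRESHOLD:
        return "human_review", parsed
    return "accept", parsed


print(route('{"answer": "Paris", "confidence": 0.92}')[0])  # accept
print(route('{"answer": "Lyon", "confidence": 0.41}')[0])   # human_review
print(route("Sure! The answer is Paris.")[0])               # human_review
```

Anything malformed goes to review by default, so a formatting slip can never silently pass as a confident answer.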

Adding these safeguards makes your AI workflow strong and reliable. You don’t need to be a programmer to do this; just design your prompts and workflows with verification in mind.

Challenges in Task Decomposition and Planning

Deploying multiple agents can be tricky. The way you break down tasks is key to success. Advanced models can falter if tasks are unclear or the workflow is messy. Knowing these challenges in multi-agent systems is crucial for building strong automation.

Ambiguity in Goal Setting for Autonomous Agents

Autonomous agents need clear goals to work well. Vague goals can cause agents to act in confusing ways. This leads to wasted effort and wrong results. Make sure each agent knows its exact role clearly.

If an agent doesn’t know its limits, it might try to solve the wrong problems. This confusion is a big challenge in multi-agent systems that can cause projects to fail. Always give clear rules to keep agents focused.

Failure to Maintain Global State Consistency

A multi-agent system needs a single source of truth to stay in sync. If one agent updates data but another keeps using outdated information, the system will fail. Keeping a consistent global state is crucial for everyone to work together.

Without a shared memory or state manager, agents can go their separate ways. Use a shared database or a strong messaging system to track progress. This keeps all agents fully aligned with the project’s status.
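One way a state manager can prevent stale updates is optimistic versioning: every write must name the version it was based on, and writes based on an outdated version are rejected. The `SharedState` class below is a hypothetical in-memory sketch of that idea; a production system would rely on its database's own transactional or compare-and-set features.

```python
import threading


class StaleStateError(Exception):
    """Raised when an agent tries to write based on an outdated read."""


class SharedState:
    """Hypothetical in-memory state manager with optimistic versioning."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._data: dict[str, object] = {}
        self._version = 0

    def read(self) -> tuple[int, dict[str, object]]:
        with self._lock:
            return self._version, dict(self._data)  # a copy, so callers can't mutate it

    def write(self, based_on_version: int, updates: dict[str, object]) -> int:
        with self._lock:
            if based_on_version != self._version:
                # Someone else wrote after this agent's read: reject the stale update.
                raise StaleStateError(
                    f"update based on version {based_on_version}, current is {self._version}"
                )
            self._data.update(updates)
            self._version += 1
            return self._version


state = SharedState()
v_a, _ = state.read()  # agent A reads version 0
v_b, _ = state.read()  # agent B also reads version 0
state.write(v_a, {"invoice_status": "paid"})  # A's write succeeds, version becomes 1
try:
    state.write(v_b, {"invoice_status": "overdue"})  # B's write is based on stale data
except StaleStateError as err:
    print("rejected:", err)  # B must re-read the latest state and retry
```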

Handling Unexpected Edge Cases in Complex Workflows

Complex tasks often have unexpected twists. When an agent faces an unfamiliar situation, it might loop endlessly or freeze. Plan fail-safes for these cases, such as step limits and a path to escalate to a human.

By adding validation steps, you can catch errors early. This proactive approach helps create reliable workflows, even under stress.

Evaluating Performance Metrics in Multi-Agent Environments

When checking AI workflow performance, don’t just look at the end result. It’s not enough to see if it looks right at first. To truly understand multi-agent systems, you need to see the whole journey of your data.

Defining Success Beyond Simple Output Accuracy

Many business owners only check the final result. But a correct answer that took a long time or consumed excessive resources carries a hidden cost. True success means looking at the time, resources, and logic used along the way.

Focus on how fast tasks are completed and how many times steps are repeated. If your agents keep redoing the same thing, your system isn’t as efficient as it could be. Efficiency is what makes a workflow healthy.

Monitoring Agent Interaction Logs for Bottlenecks

Your interaction logs are full of useful information. By reviewing logs from models such as GPT-4, Claude 3, Qwen2.5, and CodeLlama, you can find where your multi-agent systems slow down. Look for patterns where one agent waits too long or where a prompt repeatedly causes errors.

By finding these bottlenecks, you can make your system better. You might find that one model is better at coding or that a certain prompt causes delays. Making changes based on data is how you get better performance.
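If your platform can export logs as structured records, a few lines of code can surface slow agents and repeated steps. The record shape assumed here (`agent`, `step`, `duration_s`, `status`) is hypothetical; adapt the keys to whatever your tools actually produce.

```python
from collections import Counter, defaultdict


def summarize_log(records: list[dict]) -> dict:
    """Summarize records shaped like
    {"agent": str, "step": str, "duration_s": float, "status": "ok" or "error"}.
    """
    time_per_agent: dict[str, float] = defaultdict(float)
    errors_per_agent: Counter = Counter()
    runs_per_step: Counter = Counter()
    for record in records:
        time_per_agent[record["agent"]] += record["duration_s"]
        runs_per_step[(record["agent"], record["step"])] += 1
        if record["status"] == "error":
            errors_per_agent[record["agent"]] += 1
    slowest = max(time_per_agent, key=time_per_agent.get) if time_per_agent else None
    return {
        "time_per_agent": dict(time_per_agent),
        "slowest_agent": slowest,
        "errors_per_agent": dict(errors_per_agent),
        # Steps that ran more than once usually mean retries or redo loops.
        "repeated_steps": {step: n for step, n in runs_per_step.items() if n > 1},
    }
```

The `repeated_steps` entry maps directly to the efficiency question above: if the same agent keeps redoing the same step, that is where to look first.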

Benchmarking Against Single-Agent Baselines

To show the value of your setup, compare it to a single-agent baseline. If your complex system doesn’t do much better than a single model, you might be overcomplicating things. Benchmarking helps you make the right choices for your system.

When you compare your multi-agent systems to simpler models, you see how much room for improvement there is. This comparison helps you know when to add more agents and when to keep it simple. Always aim for a balance where the extra complexity of multiple agents is worth it.
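A baseline comparison needs only a few numbers from each setup, measured on the same evaluation tasks. This sketch assumes you have already collected correct answers, cost, and time for both runs; the `RunStats` type and the five-point accuracy threshold in `multi_agent_worth_it` are hypothetical choices, not a standard rule.

```python
from dataclasses import dataclass


@dataclass
class RunStats:
    correct: int     # tasks answered correctly on a shared evaluation set
    total: int       # tasks attempted
    cost_usd: float  # total spend for the run
    seconds: float   # total wall-clock time

    @property
    def accuracy(self) -> float:
        return self.correct / self.total

    @property
    def cost_per_correct(self) -> float:
        return self.cost_usd / self.correct if self.correct else float("inf")


def multi_agent_worth_it(single: RunStats, multi: RunStats, min_gain: float = 0.05) -> bool:
    # Hypothetical rule: require an absolute accuracy gain of at least min_gain.
    # The small tolerance absorbs floating-point noise (e.g. 0.75 - 0.70).
    return multi.accuracy - single.accuracy >= min_gain - 1e-9


def report(single: RunStats, multi: RunStats) -> str:
    return (
        f"accuracy {single.accuracy:.1%} -> {multi.accuracy:.1%}, "
        f"cost per correct answer ${single.cost_per_correct:.4f} -> ${multi.cost_per_correct:.4f}, "
        f"time {single.seconds:.0f}s -> {multi.seconds:.0f}s"
    )
```

Cost per correct answer is often more revealing than raw accuracy: a setup that is slightly more accurate but several times more expensive per correct result may not be worth the added complexity.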

Optimizing Agent Collaboration and Memory

Getting your agents to share information is key to a strong AI workflow. When agents don’t share, they might repeat mistakes or miss important updates, which makes information sharing one of the major factors affecting multi-agent system performance. With a shared memory, all agents can work with the full project context.

Implementing Shared Vector Databases for Context

A shared vector database is like a long-term memory for your agents. Instead of just using prompts, agents can look up past data in a database like Pinecone or Weaviate. This keeps their work consistent and prevents them from forgetting past decisions.

Having a central knowledge base helps agents avoid mistakes and work together better. It is one of the most effective ways to improve multi-agent LLM systems, turning your agents into a team that learns from each other.
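The retrieval pattern behind a shared vector database can be sketched in memory. This toy version stands in for a real service such as Pinecone or Weaviate and does not use their APIs: it "embeds" text as word counts and ranks stored notes by cosine similarity, whereas production systems use learned embedding models. `SharedMemory` is a hypothetical class name.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy "embedding": lowercase word counts. A real system would call an
    # embedding model and store dense vectors in a vector database.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SharedMemory:
    """In-memory stand-in for a shared vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[str, str, Counter]] = []  # (agent, text, vector)

    def add(self, agent: str, text: str) -> None:
        self._items.append((agent, text, embed(text)))

    def search(self, query: str, k: int = 3) -> list[tuple[float, str, str]]:
        q = embed(query)
        scored = [(cosine(q, vec), agent, text) for agent, text, vec in self._items]
        scored.sort(key=lambda item: item[0], reverse=True)
        return scored[:k]


memory = SharedMemory()
memory.add("planner", "Decision: invoices are due within 30 days")
memory.add("support", "Customer asked about a refund for order 1182")
print(memory.search("when are invoices due", k=1))
```

Any agent can now look up the planner's earlier decision by meaning rather than relying on it still being in its own context window.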

Refining Prompt Engineering for Inter-Agent Communication

Good communication is key to avoid agents working against each other. You need to design clear prompts for each message. Using formats like JSON or XML helps agents understand instructions better.

Iterative testing of these prompts is crucial. It makes sure agents know their roles well. By improving how agents talk to each other, you make your workflow smoother and faster.
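A structured envelope makes malformed messages fail loudly instead of being misread. The `AgentMessage` fields and the allowed intents below are illustrative assumptions, not an established standard:

```python
import json
from dataclasses import asdict, dataclass

ALLOWED_INTENTS = {"request", "result", "error"}
REQUIRED_FIELDS = {"sender", "recipient", "intent", "payload"}


@dataclass
class AgentMessage:
    sender: str
    recipient: str
    intent: str    # one of ALLOWED_INTENTS
    payload: dict  # task-specific content


def encode(message: AgentMessage) -> str:
    return json.dumps(asdict(message))


def decode(raw: str) -> AgentMessage:
    # json.JSONDecodeError is a ValueError subclass, so callers can catch
    # every failure below with a single `except ValueError`.
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("message must be a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if not isinstance(data["intent"], str) or data["intent"] not in ALLOWED_INTENTS:
        raise ValueError(f"unknown intent: {data['intent']!r}")
    if not isinstance(data["payload"], dict):
        raise ValueError("payload must be a JSON object")
    # Ignore unexpected extra keys rather than passing them to the constructor.
    return AgentMessage(**{key: data[key] for key in REQUIRED_FIELDS})
```

When `decode` raises, the receiving agent can reply with an `error` message asking for a corrected version instead of guessing what the sender meant.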

Balancing Agent Specialization Versus Generalization

Choosing between specialized or general agents is a big decision. Specialized agents are great at detailed tasks but might struggle with broader tasks. Generalist agents are flexible but might not be precise enough for complex tasks.

The best systems use a mix of both. A “manager” agent can handle big-picture planning, while specialized agents do specific tasks. This way, your system stays flexible and detailed at the same time.
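The manager-plus-specialists pattern can be sketched as a router with a generalist fallback. The keyword rules in `classify` are a hypothetical stand-in for the manager agent's planning step, and the specialist functions are placeholders for real model calls:

```python
from typing import Callable

Handler = Callable[[str], str]


# Placeholder specialists; in practice each would wrap its own prompt and model.
def coding_agent(task: str) -> str:
    return f"[coder] {task}"


def finance_agent(task: str) -> str:
    return f"[finance] {task}"


def generalist_agent(task: str) -> str:
    return f"[generalist] {task}"


SPECIALISTS: dict[str, Handler] = {"code": coding_agent, "finance": finance_agent}


def classify(task: str) -> str:
    # Keyword rules stand in for the manager's planning step (an assumption);
    # a real manager agent might ask an LLM to choose the category.
    lowered = task.lower()
    if any(word in lowered for word in ("bug", "function", "script")):
        return "code"
    if any(word in lowered for word in ("invoice", "budget", "revenue")):
        return "finance"
    return "other"


def manager(task: str) -> str:
    # Unrecognized categories fall back to the flexible generalist.
    handler = SPECIALISTS.get(classify(task), generalist_agent)
    return handler(task)


print(manager("Fix the bug in the export script"))  # [coder] Fix the bug in the export script
print(manager("Draft a welcome email"))             # [generalist] Draft a welcome email
```

The fallback is what keeps the system flexible: a task no specialist recognizes still gets handled instead of being dropped.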

| Optimization Strategy | Primary Benefit | Implementation Difficulty |
|---|---|---|
| Shared Vector Database | Context Consistency | Moderate |
| Structured Prompting | Reduced Miscommunication | Low |
| Hybrid Agent Roles | System Flexibility | High |

Troubleshooting and Debugging Complex Agent Interactions

When your automated workflows stop working, it’s crucial to understand what’s happening. Many business owners struggle with challenges in multi-agent systems because they see AI as a “black box.” By actively monitoring, you can quickly fix problems.

Tracing Agent Decision Paths in Real-Time

Real-time monitoring lets you see how agents process information. You don’t have to wait for a final output. Instead, you can watch the reasoning chain unfold.

Look for tools that offer a detailed log of agent actions. These logs are like a flight recorder for your automation. If an agent makes a mistake, you can find the exact cause.
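If your platform lacks built-in tracing, a decorator can act as a basic flight recorder. This is a minimal sketch; `traced` and the in-memory `TRACE` list are hypothetical names, and a real setup would write to a file or an observability tool instead:

```python
import functools
import time
from typing import Any, Callable

TRACE: list[dict[str, Any]] = []  # in-memory "flight recorder"


def traced(agent_name: str) -> Callable:
    """Decorator that records each call's inputs, output or error, and duration."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            entry: dict[str, Any] = {"agent": agent_name, "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                entry["output"] = result
                return result
            except Exception as exc:
                entry["error"] = repr(exc)
                raise
            finally:
                # Runs on success and failure, so every call leaves a record.
                entry["duration_s"] = time.perf_counter() - start
                TRACE.append(entry)
        return wrapper
    return decorator


@traced("summarizer")
def summarize(text: str) -> str:
    # Placeholder agent; a real one would call a model here.
    return text[:40]


summarize("Quarterly revenue grew, but support tickets doubled.")
for entry in TRACE:
    print(entry["agent"], entry.get("output", entry.get("error")))
```

Because failed calls are recorded too, the trace shows exactly which agent raised an error and what input it received.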

Identifying Deadlocks in Agent Loops

Deadlocks happen when two agents get stuck in a circular conversation. This can waste your token budget and stall your workflow. You can spot these loops by looking for repetitive patterns in your logs.

To avoid deadlocks, consider these strategies:

- Set maximum turn limits for every agent interaction.

- Use a “supervisor” agent to break ties when two agents disagree.

- Introduce a random delay or “cooldown” period to reset the conversation flow.
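The first strategy, combined with simple repetition detection, can be sketched as follows. The agents are placeholder functions, and the `DONE` sentinel and window size are assumptions for illustration; when a loop is detected, the function returns a status so a supervisor can take over.

```python
from typing import Callable


def run_conversation(
    agent_a: Callable[[str], str],
    agent_b: Callable[[str], str],
    opening: str,
    max_turns: int = 10,
    repeat_window: int = 4,
) -> tuple[str, list[str]]:
    """Alternate between two agents until one replies DONE, the turn limit
    is reached, or a reply repeats within the recent window (a likely loop)."""
    transcript = [opening]
    speakers = (agent_a, agent_b)
    for turn in range(max_turns):
        reply = speakers[turn % 2](transcript[-1])
        if reply.strip().upper() == "DONE":
            transcript.append(reply)
            return "done", transcript
        if reply in transcript[-repeat_window:]:
            transcript.append(reply)
            return "loop_detected", transcript  # hand off to a supervisor agent
        transcript.append(reply)
    return "turn_limit", transcript


# Two placeholder agents that keep asking each other for clarification.
status, transcript = run_conversation(
    lambda msg: "Can you clarify?",
    lambda msg: "Please clarify first.",
    "Start the quarterly report",
)
print(status)  # loop_detected
```

Either exit condition stops the token spend early; the supervisor can then rephrase the task or escalate to a human.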

Tools for Visualizing Multi-Agent Workflows

You don’t need to be a software engineer to manage your AI team. Many platforms offer visual dashboards that show how agents interact. These tools make overcoming failures in multi-agent systems easier by highlighting task connections.

The following table highlights common debugging tools and their primary benefits for non-technical users:

| Tool Category | Primary Benefit | Ease of Use |
|---|---|---|
| Visual Flow Builders | Drag-and-drop logic mapping | High |
| Interaction Loggers | Detailed text-based history | Medium |
| Performance Dashboards | Real-time cost and error tracking | High |

Using these visual aids, you can troubleshoot issues on your own. Remember, every error is a chance to improve your system. With the right approach, your automation will be a reliable and powerful asset for your business.

Conclusion

Mastering complex AI environments means looking beyond simple tasks. It’s about keeping the system healthy over time. You now know how to spot and fix issues.

Improving multi-agent LLM systems starts with iterative testing. Make sure every agent communicates clearly and that each step is validated. This keeps your agents aligned with your goals.

Dealing with failures in multi-agent systems is a continuous process. See every mistake as a chance to get better. This approach turns problems into strengths for your brand.

Your journey to a more automated future is clear. Use these tips to create strong, reliable AI teams. Start small, watch your progress, and grow with confidence.

FAQ

Why do multi-agent LLM systems fail even when using advanced models like GPT-4 or Claude 3?

The MAST research, based on the MAST-Data traces, identified 14 ways these systems can fail. Often, the cause is context window limitations and recursive error propagation. When an agent misses key information or passes on wrong data, the mistake grows until the system fails. This is more likely when communication protocols can’t handle the token overhead and complexity of the task.

What are the most significant challenges in multi-agent systems regarding error management?

The biggest challenge is the hallucination cascade. This happens when an agent’s mistake spreads through the system, messing up the global state consistency. Since each agent relies on the last one’s output, small errors can lead to big failures. To fix this, add validation layers and guardrails at each step to keep data correct.

How does deep reinforcement learning improve the coordination of autonomous agents?

Deep reinforcement learning can make multi-agent LLM systems better by helping agents learn to coordinate. By training on successful interactions, you can reduce communication bottlenecks and latency issues, so the system learns to manage tasks more efficiently.

What are the specific limitations of LLM in multi-agent systems that business owners should know?

A big problem is the cost escalation due to high token overhead. These systems also struggle with ambiguity in goal setting. If the prompt is unclear, agents might get stuck in deadlocks in agent loops, passing the same wrong info back and forth.

Which factors affecting multi-agent system performance are the easiest to optimize?

Improving prompt engineering and using shared vector databases can make a big difference. These databases help agents keep context and reduce information loss. Also, balancing agent specialization and generalization boosts output accuracy.

How can I accurately measure the success of my multi-agent workflows?

Success isn’t just about the end result. You should monitor agent interaction logs to find bottlenecks. Compare your multi-agent setup to single-agent baselines to see if it’s worth it. If a single Claude 3 can do the job as well, your setup might be too complex. Use tools to visualize workflows and trace decision paths in real-time to spot problems.