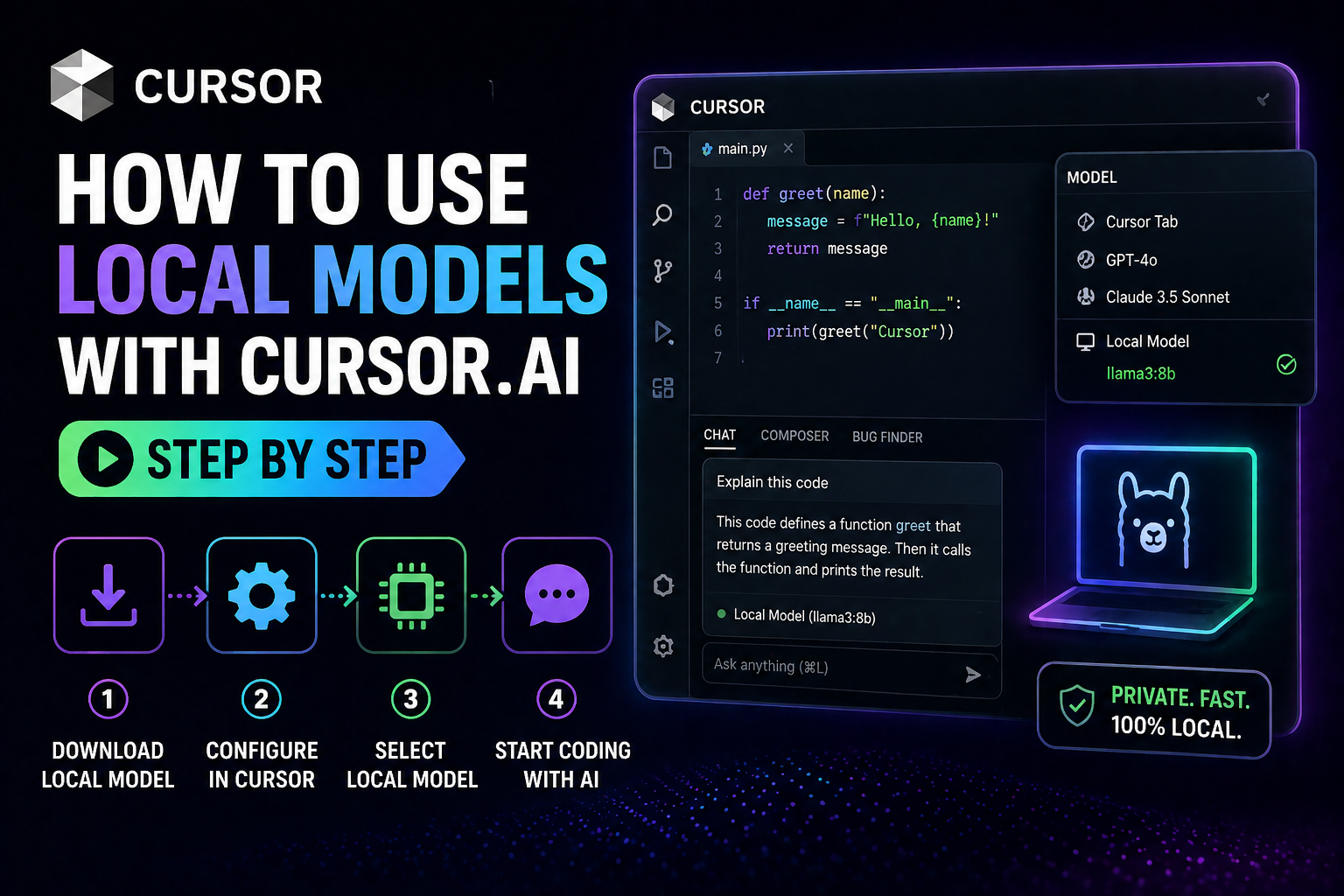

How to Use Local Models with Cursor.ai Step by Step

Ever wanted a coding assistant that doesn’t send your code to the cloud? Many developers are uneasy about sharing their work with outside servers, and running models locally is now a practical way to address that.

Connecting Cursor.ai to a model running on your own machine keeps inference on hardware you control. This guide shows you how to set up your environment so your code stays private.

We’ll make the technical steps clear so you can turn your editor into a private tool.

Key Takeaways

- Enhance data privacy by keeping your code on your own machine.

- Reduce dependency on cloud-based infrastructure for daily tasks.

- Gain full control over the specific intelligence versions you run.

- Understand the hardware requirements for smooth performance.

- Follow a simplified configuration process for immediate results.

Understanding the Benefits of Local AI Models in Cursor.ai

Running AI models on your own hardware means you don’t rely on external servers for inference. This matters for developers who work with proprietary code and want to keep it off third-party systems.

Local models also give you more control. You avoid per-request API costs and are less affected by provider downtime. The model itself runs without a cloud service, although the tunnel setup described later in this guide still needs a network connection.

The main perk is better data privacy. Your code is processed on your own machine, which reduces its exposure to third-party breaches. This is a big win for freelancers and small businesses handling confidential client work.

Let’s look at how these two methods differ:

| Feature | Cloud-Based AI | Local AI Models |
|---|---|---|
| Data Privacy | Shared with provider | Fully private |
| Internet Need | Always required | Not required for inference |
| Cost Structure | Subscription/Usage fees | Hardware investment |
| Latency | Depends on network | Depends on hardware |

Adding artificial intelligence to your work should make you feel powerful, not trapped. By using local models, you get the coding help you need while keeping your work safe. This way, your tools help your business without risking your security.

Prerequisites for Running Local Models

Starting your private AI journey means checking your hardware and setting up the right engine. Make sure your machine learning setup is ready for local inference. A solid foundation prevents crashes and keeps your work smooth.

Hardware Requirements for Optimal Performance

Running large language models locally demands substantial memory and compute. Plan for at least 16GB of RAM; 32GB is highly recommended for bigger models. A dedicated GPU with enough VRAM generates responses much faster than a CPU alone.

Mac users with Apple Silicon M-series chips do well because of their unified memory. Windows or Linux users should have a modern processor and enough disk space for model files, which can run to several gigabytes each.

Installing Ollama as Your Local Model Engine

Using Ollama is a smart choice because it makes running models like Llama 3 or DeepSeek easy. It’s a lightweight tool that lets your editor talk to local models without needing to know a lot about machine learning frameworks.

To start, go to the Ollama website and download the installer for your OS. After installing, the app runs in the background, ready for your commands. This streamlined approach means you spend less time setting up and more time creating.
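
One quick way to confirm the background service is up is to ask it which models it has installed. The sketch below assumes Ollama is listening on its default local address, `http://localhost:11434`, and uses its `/api/tags` listing endpoint. The helper names are illustrative, not part of any official client.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumed default; adjust if you changed it


def parse_model_names(tags_json: str) -> list[str]:
    """Extract model names from the JSON body returned by /api/tags."""
    payload = json.loads(tags_json)
    return [entry["name"] for entry in payload.get("models", [])]


def list_local_models(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> list[str]:
    """Ask a running Ollama instance which models are installed.

    Raises OSError (for example, connection refused) if the service is not reachable.
    """
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return parse_model_names(resp.read().decode("utf-8"))
```

Calling `list_local_models()` while Ollama is running should return the names of the models you have pulled. An empty list usually means the service is up but no model has been downloaded yet.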

Configuring Cursor.ai for Local Model Integration

Getting your local AI engine to work with your editor is easy once you know how. First, make sure your environment can handle custom API requests. Cursor.ai is powerful but needs a specific setup to work with your local machine.

Cursor can’t connect to a plain localhost address. You need a secure HTTPS endpoint that forwards requests to your local server. The HTTPS layer encrypts traffic between the editor and your machine while the model itself still runs on your own hardware.

Accessing the Cursor Settings Menu

To start, open your editor and find the settings. Click the gear icon in the top right, or open the command palette with a keyboard shortcut and search for “Settings.”

With the menu open, you’ll see many options for your workflow. Look at the primary navigation sidebar for configuration categories. This area is your central hub for customizations, including AI provider settings.

Navigating to the Models Tab

In the settings menu, find the “Models” section. This is where you set up Cursor to work with different AI providers, and where you enter your local server details.

To ensure compatibility, treat your local engine as an OpenAI-compatible provider. Enter your secure HTTPS endpoint in the base URL field for that provider and save. After that, Cursor will send requests to your local model.
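
To make “OpenAI-compatible” concrete, here is a minimal sketch of the kind of request such a provider receives. It assumes the server exposes a `/chat/completions` route under its base URL, as Ollama does under `/v1`. The function name, the placeholder key, and the example URL are illustrative assumptions.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for a compatible server."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Local servers typically ignore the key, but clients usually send one.
            "Authorization": "Bearer placeholder-key",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` sends the request. The point of the sketch is the shape of the payload: a model name plus a list of role-tagged messages.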

Connecting Ollama to Your Cursor Environment

Setting up a secure connection is the last step before you can use local AI in your workspace. You need to expose your local Ollama instance through a public HTTPS address, using a secure tunnel such as ngrok or Cloudflare Tunnel. The tunnel is what lets Cursor reach the model running on your hardware.

Verifying the Local API Endpoint

Before you finish the setup, check that your local server is reachable. When your tunnel is up, you get a public URL for your Ollama instance. Open this URL in your browser to see if it responds.

If it works, you’ll see a short status message from the server (Ollama normally replies with “Ollama is running”). Keep the tunnel running for as long as you want the editor to reach your model.
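
The same check can be scripted. This sketch assumes the tunnel forwards to Ollama's root path, which normally answers with a plain-text "Ollama is running" banner. The function names are hypothetical, and the URL is whatever your tunnel tool printed.

```python
import urllib.request
from urllib.parse import urlparse


def looks_like_ollama(body: str) -> bool:
    """Return True if the response body contains Ollama's usual status banner."""
    return "ollama is running" in body.lower()


def check_tunnel(public_url: str, timeout: float = 10.0) -> bool:
    """Return True if the HTTPS tunnel URL reaches an Ollama instance."""
    if urlparse(public_url).scheme != "https":
        # Fail early: the editor setup described here expects HTTPS.
        raise ValueError("expected an https:// URL for the tunnel")
    with urllib.request.urlopen(public_url, timeout=timeout) as resp:
        return looks_like_ollama(resp.read().decode("utf-8", errors="replace"))
```

A `False` result or a connection error usually means the tunnel is down or pointing at the wrong local port.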

Adding Custom Local Models to the Cursor Interface

With your URL checked, you’re set to connect the model to your editor. Go to the settings in Cursor and find the Models section. Put your tunnel’s public URL in the API base URL field there.

After saving, add the model name exactly as it appears in your local library, then pick it from the model list to start using it.

Selecting and Managing Your Local LLMs

Your choice of local model affects the quality of help you get on complex coding tasks. Picking the right one means matching the model’s size to your hardware and your project’s needs, so that your system stays responsive and the results stay useful.

Choosing Between Llama 3, Mistral, and Other Architectures

Each architecture has its own strengths. For example, Llama 3 is great for solving tough problems. Mistral is known for being fast and working well on everyday computers.

Think about these points when choosing:

- Hardware Constraints: Big models need more VRAM and memory.

- Task Complexity: Use big models for deep predictive modeling. Smaller models are better for simple tasks.

- Architecture Compatibility: Make sure your model fits with your local engine.
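
The hardware constraint above can be turned into a simple rule: pick the largest model that fits in memory with some headroom left for the editor and operating system. The sketch below is one way to express that. The model names and memory figures in the usage note are made-up placeholders, not measurements.

```python
from typing import Dict, Optional


def pick_model(
    candidates: Dict[str, float],
    available_gb: float,
    headroom_gb: float = 2.0,
) -> Optional[str]:
    """Pick the largest candidate whose memory need fits within the budget.

    candidates maps a model name to its approximate memory need in GB.
    Returns None when nothing fits.
    """
    budget = available_gb - headroom_gb
    fitting = {name: need for name, need in candidates.items() if need <= budget}
    if not fitting:
        return None
    return max(fitting, key=fitting.get)
```

For example, with the hypothetical catalog `{"small": 5.0, "medium": 9.0, "large": 40.0}` and 16GB available, the function returns `"medium"`. With only 6GB available it returns `None`, which is a signal to look at a smaller or more heavily quantized model.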

Managing Model Downloads and Storage

Good storage management keeps your computer tidy. Regularly clean out old models to free up space. A tidy workspace means your system runs smoothly.

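When adding new local models, watch the names. Whichever engine you use, whether Ollama or an alternative such as LM Studio, the model name you enter in the editor must match the engine’s name for it exactly. A mismatched name leads to failed requests and wasted time.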

Here’s how to manage storage:

- Keep your models in one place that’s easy to find.

- Use clear names for model versions and settings.

- Get rid of old files that you don’t use anymore.
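
To see how much space your models actually take, you can total the size of the model directory. This is a minimal sketch; the directory path is an assumption (Ollama commonly uses `~/.ollama/models`, but the location varies by platform and install method).

```python
import os


def directory_size_bytes(path: str) -> int:
    """Sum the sizes of all regular files under path, skipping symlinks."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            full = os.path.join(root, name)
            if not os.path.islink(full):
                total += os.path.getsize(full)
    return total


def format_gib(num_bytes: int) -> str:
    """Render a byte count as gibibytes with two decimals."""
    return f"{num_bytes / 1024 ** 3:.2f} GiB"


if __name__ == "__main__":
    model_dir = os.path.expanduser("~/.ollama/models")  # assumed location
    if os.path.isdir(model_dir):
        print(model_dir, format_gib(directory_size_bytes(model_dir)))
```

If you use Ollama, `ollama list` shows the installed models and `ollama rm <name>` removes one you no longer need.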

How to Use Local Models with Cursor.ai for Coding Tasks

Learning how to use local models with cursor.ai makes your coding faster and more private. After setting it up, you can use AI assistants on your own computer. This way, your code stays on your device, but you get smart suggestions and help.

Initiating Chat with Local Models

Starting a chat with your local model is easy once you’re connected. Just open the chat and pick your local engine from the menu. Cursor keeps your data safe by sending requests through a secure tunnel to your computer.

Here are some tips for using your model well:

- Be specific: Tell your model what you’re working on clearly.

- Iterate: If the first answer isn’t right, ask again to get better results.

- Monitor resources: Watch your computer’s memory to make sure it can handle the model’s work.

Utilizing Local Models for Code Completion

You can also use your local model for code completion. It’s like having a partner who anticipates what you’ll write next. Because inference runs on your computer, response time depends on your hardware rather than on a cloud provider’s load.

For code completion, the model uses the surrounding code as context rather than learning from it permanently. As you type, it suggests functions, variables, and more, which helps you stay focused.

Optimizing Performance for Large Codebases

As your project expands, your local model needs adjustments to stay efficient. Working with big repositories can be tough on your system if not managed right. By controlling your settings, you keep your AI from slowing you down.

Adjusting Context Window Settings

The context window determines how much code your model can consider at once. If it’s too large, you may run out of memory, and the model can slow down sharply or crash. Aim for a size that covers the code you need without exhausting your RAM.

Start with a medium window size and only increase it when needed. This keeps your system running smoothly while your model can still grasp complex code relationships.
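
One way to reason about window size is as a token budget: whatever you send, plus room for the reply, must fit. The sketch below uses a crude four-characters-per-token estimate, which is an assumption and not a real tokenizer, to decide how many code snippets fit. With Ollama, the window itself is typically set through the `num_ctx` option.

```python
CHARS_PER_TOKEN = 4  # rough heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def select_snippets(
    snippets: list[str],
    context_tokens: int,
    reserve_for_reply: int = 512,
) -> list[str]:
    """Keep snippets in order until the estimated token budget is used up."""
    budget = context_tokens - reserve_for_reply
    chosen: list[str] = []
    used = 0
    for snippet in snippets:
        cost = estimate_tokens(snippet)
        if used + cost > budget:
            break
        chosen.append(snippet)
        used += cost
    return chosen
```

If too few snippets fit, that is a sign to raise the window size gradually or to send a narrower selection of files.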

Balancing Model Precision and Speed

To keep your development environment fast, match the model to the task. You don’t always need the most advanced model for good code suggestions. A smaller, quicker model is often the better choice for everyday work.

Here are some tips to keep your workflow smooth:

- Prioritize speed for everyday code tasks to keep your flow.

- Use high precision only for deep code refactoring.

- Regularly clear your cache to avoid memory issues during long sessions.

- Try different quantization levels to find the best for your hardware.

By tweaking these settings, you create a setup that grows with your business. A well-tuned local model offers the right mix of intelligence and agility for any coding task.

Troubleshooting Common Connection Issues

If your Cursor environment won’t talk to your local model, it’s probably a simple setup issue. These problems can be annoying, but they’re often easy to fix. Start by making sure your local setup is stable and accessible.

Resolving Port Conflicts and API Errors

Most connection problems come from using an insecure endpoint. Cursor needs an HTTPS-secured connection to talk to your local API. If you’re using plain HTTP, the request will be blocked for security reasons.

Also, make sure your local port isn’t blocked or used by something else. Here’s how to check:

- Make sure your local model engine is running on the right port.

- Make sure your URL starts with https://, not http://.

- Check your firewall settings to see if they’re blocking the port.

- Try accessing the API endpoint with a browser or cURL to see if it works outside of Cursor.
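
The first two checks in this list are easy to automate. The sketch below assumes Ollama's default port, 11434, and reports any problems it finds as plain messages. The function names are hypothetical, and the `probe` parameter exists only so the port check can be swapped out.

```python
import socket
from typing import Callable, List


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def diagnose(
    endpoint_url: str,
    local_port: int = 11434,  # Ollama's default port; change if yours differs
    probe: Callable[[str, int], bool] = port_is_open,
) -> List[str]:
    """Return a list of likely configuration problems (empty if none found)."""
    problems = []
    if not endpoint_url.startswith("https://"):
        problems.append("endpoint URL should start with https://")
    if not probe("127.0.0.1", local_port):
        problems.append(f"nothing is listening on local port {local_port}")
    return problems
```

An empty result does not prove that everything works, but it rules out the two most common causes before you dig into firewall settings.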

Debugging Model Loading Failures

Even if you connect, the model might not load or respond. This can happen if your system runs out of memory or the model file is damaged. Switching to a smaller or more heavily quantized model often resolves memory-related failures.

For persistent loading errors, try these steps:

- Check your hardware resources: Make sure your GPU or RAM can handle the model size you chose.

- Validate model files: If you think the model file was damaged during download, try downloading it again.

- Review logs: Look at the output logs of your local engine for error codes or missing dependencies.

- Restart the service: Sometimes, just restarting your local model engine can fix temporary issues.

Keeping your environment clean and secure helps your AI coding stay fast and reliable. Always double-check your setup when you update your software or change your model.

Advanced Techniques for Model Deployment

To get the most out of your hardware, try advanced deployment methods. These techniques let you fine-tune your setup for complex tasks. This way, you can work faster and more accurately.

Running Quantized Models for Lower Memory Usage

Quantization makes your models use less memory by storing their weights at lower precision. This lets them run on ordinary hardware without losing too much accuracy, and it often speeds up generation as well.

This method is key for those with limited RAM. It lets you run bigger, more capable models than would otherwise fit, so you can use advanced models without expensive equipment.

| Model Type | Memory Usage | Performance |
|---|---|---|
| Standard (FP16) | High | Optimal |
| Quantized (8-bit) | Medium | Balanced |
| Quantized (4-bit) | Low | High Efficiency |
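
As a rough illustration of the table above, the memory needed for the weights alone is approximately the parameter count times the bits per weight, divided by eight. The sketch below computes that figure. It is a lower bound, since real usage is higher once the context cache and runtime overhead are added, and practical quantization formats use slightly more than their nominal bit width.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # A 7-billion-parameter model is used here purely as an example size.
    for label, bits in [("FP16", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label}: {weight_memory_gb(7, bits):.1f} GB")
```

For a 7-billion-parameter model this gives about 14 GB at FP16, 7 GB at 8-bit, and 3.5 GB at 4-bit, which is why 4-bit variants are the usual starting point on a 16GB machine.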

Integrating Custom System Prompts

Custom system prompts are like a guide for your model. They help it understand your specific needs. This ensures the AI works well with your coding style or business needs.

“The true power of local AI lies not just in the model itself, but in how effectively you guide its reasoning to solve your specific problems.”

— Anonymous Tech Strategist

These prompts are crucial for predictive modeling or software development. They help you get consistent results. This customization turns a general tool into a specialized helper that knows your machine learning needs.
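
In OpenAI-style chat requests, a system prompt is simply the first message in the conversation, tagged with the `system` role. The sketch below shows that structure. The prompt text in the usage note is only an example.

```python
def build_messages(system_prompt: str, user_prompt: str) -> list[dict[str, str]]:
    """Return a chat message list with the system prompt placed first."""
    return [
        {"role": "system", "content": system_prompt.strip()},
        {"role": "user", "content": user_prompt.strip()},
    ]
```

For instance, `build_messages("You are a reviewer. Prefer small, typed functions.", "Refactor this module.")` produces a two-message list that can be sent as the `messages` field of a chat request. Keeping the system prompt short and specific tends to give more consistent results than a long list of rules.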

Comparing Local Models Against Cloud-Based Alternatives

Cloud services are powerful, but local models have their own perks for coding. Choosing where to host your AI depends on your business needs and security.

Data Privacy and Security Advantages

Running AI on your own hardware gives you full control over your data. Cloud services send your code to external servers, which can be risky for secret projects.

Keeping your AI work internal means your secrets stay safe. This way, you avoid data leaks or unauthorized access to your code.

Latency and Cost Considerations

Local models perform well when they run on a fast, powerful machine. Since processing happens locally, you skip the network delays that cloud services add.

Running your own AI can also save money in the long run. Your main cost is the initial hardware, whereas cloud services charge per use.

| Feature | Local Models | Cloud-Based AI |
|---|---|---|
| Data Privacy | High (On-device) | Variable (Server-side) |
| Latency | Low (Hardware dependent) | Medium (Network dependent) |
| Cost | Fixed (Hardware cost) | Variable (Usage-based) |

Local models aren’t the best for every task. They’re great for privacy and saving money, but cloud services can handle bigger tasks. A smart approach is to use both, depending on the task’s complexity.

Best Practices for Model Evaluation and Testing

Evaluation is key to making your coding assistant truly useful. When using artificial intelligence, it’s important to ensure the code is accurate and secure. Testing regularly helps keep your projects at high standards.

Benchmarking Local Model Responses

To see how well your model works, use a consistent benchmarking method. Start by running common coding tasks and compare the results. This data analysis helps you find where the model might falter, like in complex tasks or specific syntax.

- Create a library of “golden” test cases for your most common tasks.

- Measure the time taken for the model to generate a complete response.

- Compare the accuracy of different model versions to find the best fit for your needs.

Keeping track of these metrics in a table helps you see how your model deployment changes over time. This ensures your local environment stays stable as you update it.

| Metric | Target Goal | Priority |
|---|---|---|
| Code Accuracy | >90% Success | High |
| Response Latency | Medium | |
| Context Retention | High | High |
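
A “golden” test library can start very small. The sketch below runs a list of prompts through any function that returns model output and reports the pass rate and average latency. The substring check is a deliberately simple stand-in for a real correctness test, and `generate` is a placeholder for however you call your local model.

```python
import time
from typing import Callable


def run_benchmark(
    generate: Callable[[str], str],
    cases: list[tuple[str, str]],
) -> dict[str, float]:
    """Run (prompt, expected_substring) cases; report pass rate and mean latency."""
    passed = 0
    total_seconds = 0.0
    for prompt, expected in cases:
        start = time.perf_counter()
        output = generate(prompt)
        total_seconds += time.perf_counter() - start
        if expected in output:
            passed += 1
    count = len(cases)
    return {
        "pass_rate": passed / count if count else 0.0,
        "mean_latency_s": total_seconds / count if count else 0.0,
    }
```

Running the same cases after each model or settings change gives you comparable numbers for the accuracy and latency rows in the table above.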

Iterative Refinement of AI-Generated Code

After collecting data, it’s time to refine your approach. View your interaction with the model as an iterative process. If the output isn’t perfect, tweak your prompts or add more context to guide the model.

By regularly checking the code from your tools, you improve future results. This dedication to refinement keeps your artificial intelligence tools effective in your development process. It helps you create better software with less effort.

Conclusion

Learning to use local models with cursor.ai is a smart move for your business. It’s affordable and lets you keep your data safe. You also get to work in a top-notch coding space.

This approach helps you create better tools without spending a lot on cloud services. It’s a game-changer for your business.

Keeping your models in check is crucial for success. Try out different setups to see what works best for your projects. This way, you can grow your business and stay competitive online.

Your work with cursor.ai is just beginning. Try new things and make your workflows better. Start building your own AI system today. It will take your productivity and creativity to the next level.

FAQ

What are the primary benefits of learning how to use local models with cursor.ai?

Using local models with cursor.ai means your data stays private, because your code is processed on your own machine. You can also save money on subscription costs. You can do sensitive work without worrying about others seeing it.

What hardware benchmarks should I meet for a smooth machine learning experience?

For the best results, you need at least 16GB of RAM, and 32GB is even better. A dedicated GPU with plenty of VRAM is also key. For Mac users, Apple Silicon chips work well. If your hardware falls short, expect slow performance.

Why can’t I connect Cursor directly to “localhost” on my machine?

Cursor.ai needs a secure HTTPS connection, but local Ollama instances usually use HTTP. So you need a tool like ngrok or Cloudflare Tunnel. These tools create a secure link that lets the editor talk to your local AI setup.

How do I add custom local models like Llama 3 to the Cursor interface?

First, make sure your tunnel is set up. Then, go to Cursor Settings and find the Models tab. Enter your public tunnel URL there. It’s a good idea to add model tags, like Llama 3. This tells the editor which model to use for your tasks.

What is a quantized model, and why should I use one?

Quantized models are LLMs whose weights are stored at lower precision so they use less memory. They run on ordinary hardware that can’t handle full-precision models, which is great for small businesses. They offer a good balance between output quality and resource use.

How can I ensure the quality of the code generated by my local setup?

Regularly test your model against known good outputs. Local models may be less accurate than larger cloud ones, so test small pieces of code often. This way, you can refine your prompts and model choice and get better results.

Can local models handle complex data analysis and algorithm optimization?

Yes, they can. While Mistral is good for quick code, bigger models can do more. Just make sure your Cursor settings let the model see enough of your project.

What should I do if I encounter an API error or connection failure?

First, check if your Ollama service is running. Also, make sure your tunnel is active. Most problems come from using HTTP instead of HTTPS. If you’re still having trouble, look for port conflicts. Also, double-check that the model name in Cursor matches your local library.